Running and Scaling a FastAPI ML Inference Server with Kubernetes

A guide on scaling your model's inference capabilities.

Running and scaling Machine Learning models is a complex problem that requires consulting and experimenting with lots of solutions.

In this tutorial, let’s look at a way to make the process easier with fewer moving parts using the following tools:

FastAPI to build our inference API, and

Ray Serve to make our API automagically scalable on a locally running Kubernetes cluster.

Let’s get started!

Building a Face Detection model

We’ll be using the open source Deepface library to perform face detection and extraction on a given image.

A simple function called detect_face will take an image URL as a parameter and:

download the image using requests

read the image using PIL

convert the image into a numpy array, and finally,

perform face detection on the image

We’ll get an output consisting of the coordinates of the faces detected in the given image.

Sounds sweet and simple? Here’s the implementation:

    def detect_face(self, img_path: str):
        # Run inference
        response = requests.get(img_path, stream=True)
        if response.status_code == 200:
            image_bytes = BytesIO(response.content)
            image_pil = Image.open(image_bytes)
            img_np = np.array(image_pil)
            response = DeepFace.extract_faces(img_path=img_np, detector_backend="mtcnn")
            self.logger.info("Output done.")
            output = []
            for index, face in enumerate(response):
                output.append(response[index]['facial_area'])
            return output

Building the Inference API using FastAPI and Ray

Ray has a fantastic integration with FastAPI that allows us to use a few decorators to automatically scale the API:

    from io import BytesIO

    from fastapi.responses import JSONResponse
    from deepface import DeepFace
    from fastapi import FastAPI
    import numpy as np
    from PIL import Image
    from ray import serve
    import requests

    from logger import Logger

    app = FastAPI()


    @serve.deployment(num_replicas=2, ray_actor_options={"num_cpus": 0.5, "num_gpus": 0})
    @serve.ingress(app)
    class FaceDetector:
        def __init__(self):
            # Load model
            self.logger = Logger(module="FaceDetector")
            self.logger.info("Initialized model.")

        @app.post("/detect-face")
        def detect_face(self, img_path: str) -> JSONResponse:
            # Run inference
            response = requests.get(img_path, stream=True)
            if response.status_code == 200:
                image_bytes = BytesIO(response.content)
                image_pil = Image.open(image_bytes)
                img_np = np.array(image_pil)
                faces = DeepFace.extract_faces(img_path=img_np, detector_backend="mtcnn")
                self.logger.info("Output done.")
                # Keep only the bounding box of each detected face
                output = [face["facial_area"] for face in faces]
                return JSONResponse(output)
            # The image could not be downloaded, so report the failure with a matching status code
            return JSONResponse({"Error": "Internal Server Error 500"}, status_code=500)


    facedetector_app = FaceDetector.bind()

Here are the changes involved in converting our simple inference function into an API:

We return a JSONResponse of the list of dictionaries of detected faces instead of the output list itself. Our response will look like the following: [{"x":294,"y":55,"w":73,"h":96}, {"x":194,"y":525,"w":833,"h":86}]

We wrap the inference function in a /detect-face route.

We use the @serve.deployment decorator to define the number of replicas of the API we want to run for scale: here we use 2, with each replica reserving 0.5 CPUs and 0 GPUs.

The bind() function makes sure that the deployment object (our one or more inference functions) is built into an application that can be run using Ray’s serve.run command.

The logging setup is available in the GitHub repo of this project. It’s not really necessary for this tutorial at large.
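To make the response format concrete, here is a small sketch of how a client might turn each returned dictionary into corner coordinates. It assumes that x and y mark the top-left corner of the box and that w and h are its width and height, which is how the sample response reads. The function name facial_area_to_box is made up for this illustration.

```python
import json

# Sample response body copied from the example above.
raw = '[{"x":294,"y":55,"w":73,"h":96}, {"x":194,"y":525,"w":833,"h":86}]'


def facial_area_to_box(area: dict) -> tuple:
    """Convert an {x, y, w, h} dict into (left, top, right, bottom).

    Assumes (x, y) is the top-left corner of the detected face.
    """
    left, top = area["x"], area["y"]
    return (left, top, left + area["w"], top + area["h"])


boxes = [facial_area_to_box(area) for area in json.loads(raw)]
for box in boxes:
    print(box)
```

Corner coordinates like these are what most image libraries expect when cropping or drawing a rectangle around a face.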

The Docker Setup

Make sure that Docker is running on your machine and has at least 6GB of memory and 4 CPUs provided for consumption.
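As a rough sanity check on those numbers, the sketch below adds up the CPU and memory requested by the Ray pods in the RayService config used later in this tutorial (one head pod with 1 CPU and 2Gi, and up to three worker pods with 1 CPU and 1Gi each). It ignores the overhead of Kubernetes itself and of the kuberay-operator pod, and it treats GB and Gi as equal for simplicity, so read it as an estimate rather than an exact requirement.

```python
# Pod resource requests taken from the RayService config in this tutorial.
HEAD_POD = {"cpu": 1, "memory_gi": 2}
WORKER_POD = {"cpu": 1, "memory_gi": 1}
MAX_WORKERS = 3  # maxReplicas of the worker group

# What we give Docker, per the recommendation above (GB treated as Gi).
DOCKER_BUDGET = {"cpu": 4, "memory_gi": 6}


def peak_usage(head: dict, worker: dict, max_workers: int) -> dict:
    """Total resources requested when the worker group is fully scaled up."""
    return {key: head[key] + worker[key] * max_workers for key in head}


peak = peak_usage(HEAD_POD, WORKER_POD, MAX_WORKERS)
fits = all(peak[key] <= DOCKER_BUDGET[key] for key in peak)
print(peak, "fits" if fits else "does not fit")
```

With the worker group at its maximum of three pods, the Ray pods alone ask for all 4 CPUs, which is why giving Docker less than that is likely to leave pods stuck in a pending state.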

Now install kubectl, Helm, and Kind.

We’ll need all three as we create a new cluster and deploy our app.

The first thing we want to do is make a new Kind cluster:

    $ kind create cluster

Next, we want to use Helm to install kuberay-operator:

    $ helm repo add kuberay https://ray-project.github.io/kuberay-helm/
    $ helm repo update
    $ helm install kuberay-operator kuberay/kuberay-operator --version 1.0.0

Make sure the kuberay-operator pod is running by using kubectl get pods:

    # NAME                                READY   STATUS    RESTARTS   AGE
    # kuberay-operator-7fbdbf8c89-pt8bk   1/1     Running   0          3s

Running a new Ray Service

Like we do with a custom Docker Compose deployment, we need a YAML config that defines a few things:

our application working directories and packages

resources to create and

ports to run on

We do this in a new serve_config.yaml file. A great example is available in Ray’s repository; here’s the modified version for our particular use case:

    apiVersion: ray.io/v1alpha1
    kind: RayService
    metadata:
      name: rayservice-facedetectorapp
    spec:
      serviceUnhealthySecondThreshold: 900 # Config for the health check threshold for Ray Serve applications. Default value is 900.
      deploymentUnhealthySecondThreshold: 300 # Config for the health check threshold for Ray dashboard agent. Default value is 300.
      serveConfigV2: |
        proxy_location: EveryNode
        http_options:
          host: 0.0.0.0
          port: 8000
        applications:
          - name: app1
            route_prefix: /
            import_path: model:facedetector_app
            runtime_env:
              working_dir: "https://github.com/yashprakash13/face-detector-app/archive/master.zip"
              pip: ["deepface"]
      rayClusterConfig:
        rayVersion: "2.6.3" # should match the Ray version in the image of the containers
        ######################headGroupSpecs#################################
        # Ray head pod template.
        headGroupSpec:
          # The `rayStartParams` are used to configure the `ray start` command.
          # See https://github.com/ray-project/kuberay/blob/master/docs/guidance/rayStartParams.md for the default settings of `rayStartParams` in KubeRay.
          # See https://docs.ray.io/en/latest/cluster/cli.html#ray-start for all available options in `rayStartParams`.
          rayStartParams:
            dashboard-host: "0.0.0.0"
          #pod template
          template:
            spec:
              containers:
                - name: ray-head
                  image: rayproject/ray-ml:2.6.3
                  resources:
                    limits:
                      cpu: 1
                      memory: 2Gi
                    requests:
                      cpu: 1
                      memory: 2Gi
                  ports:
                    - containerPort: 6379
                      name: gcs-server
                    - containerPort: 8265 # Ray dashboard
                      name: dashboard
                    - containerPort: 10001
                      name: client
                    - containerPort: 8000
                      name: serve
        workerGroupSpecs:
          # the pod replicas in this group typed worker
          - replicas: 1
            minReplicas: 1
            maxReplicas: 3
            # logical group name, for this called small-group, also can be functional
            groupName: worker
            # The `rayStartParams` are used to configure the `ray start` command.
            # See https://github.com/ray-project/kuberay/blob/master/docs/guidance/rayStartParams.md for the default settings of `rayStartParams` in KubeRay.
            # See https://docs.ray.io/en/latest/cluster/cli.html#ray-start for all available options in `rayStartParams`.
            rayStartParams: {}
            #pod template
            template:
              spec:
                containers:
                  - name: ray-worker # must consist of lower case alphanumeric characters or '-', and must start and end with an alphanumeric character (e.g. 'my-name', or '123-abc')
                    image: rayproject/ray-ml:2.6.3
                    lifecycle:
                      preStop:
                        exec:
                          command: ["/bin/sh", "-c", "ray stop"]
                    resources:
                      limits:
                        cpu: 1
                        memory: 1Gi
                      requests:
                        cpu: 1
                        memory: 1Gi

For this, note that we need two things set up beforehand:

a GitHub repo that has the entire code for our app (you need to do this manually).

a kind cluster setup with kuberay operator installed (we did this earlier).
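A note on the import_path value in the config: model:facedetector_app follows a module:attribute convention. The part before the colon names a Python module (presumably a model.py at the top level of the repository archive given in working_dir), and the part after it names the object to load from that module, here the facedetector_app we created with bind(). The sketch below mimics that lookup using only the standard library. It illustrates the convention and is not Ray Serve’s actual loader; resolve_import_path is a hypothetical helper name.

```python
import importlib


def resolve_import_path(import_path: str):
    """Load the object named by a 'module:attribute' string."""
    module_name, _, attr_name = import_path.partition(":")
    if not module_name or not attr_name:
        raise ValueError(f"expected 'module:attribute', got {import_path!r}")
    module = importlib.import_module(module_name)
    return getattr(module, attr_name)


# Demonstrated with a standard-library object, since model.py only exists
# inside the app's repository.
dumps = resolve_import_path("json:dumps")
print(dumps({"ok": True}))
```

If the file or the variable in your repository is named differently, the import_path in the config has to change to match.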

After we’re done, we can run the service:

    $ kubectl apply -f serve_config.yaml

We’ll see that our app starts, and we can check on its pods using kubectl get pods.

This process should take a few minutes, after which we’re finally ready to test our app!
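If you would rather check readiness from a script than eyeball the table, the sketch below parses the plain-text output of kubectl get pods and lists any pod that is not fully ready. It assumes the default column layout (NAME, READY, STATUS, RESTARTS, AGE) seen in the operator output earlier. The helper name pods_not_ready is made up for this example, and the sample text reuses the operator pod line plus one hypothetical pod that is still starting.

```python
def pods_not_ready(kubectl_output: str) -> list:
    """Return names of pods whose READY count is incomplete or STATUS is not Running."""
    not_ready = []
    lines = kubectl_output.strip().splitlines()
    for line in lines[1:]:  # skip the header row
        columns = line.split()
        if len(columns) < 3:
            continue  # ignore blank or malformed rows
        name, ready, status = columns[0], columns[1], columns[2]
        ready_count, _, total = ready.partition("/")
        if status != "Running" or ready_count != total:
            not_ready.append(name)
    return not_ready


sample = """\
NAME                                READY   STATUS              RESTARTS   AGE
kuberay-operator-7fbdbf8c89-pt8bk   1/1     Running             0          3s
example-worker-pod                  0/1     ContainerCreating   0          3s
"""
print(pods_not_ready(sample))
```

In practice you could feed it the real output, for example from subprocess.run(["kubectl", "get", "pods"], capture_output=True, text=True).stdout, and wait until the returned list is empty.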

Testing our app

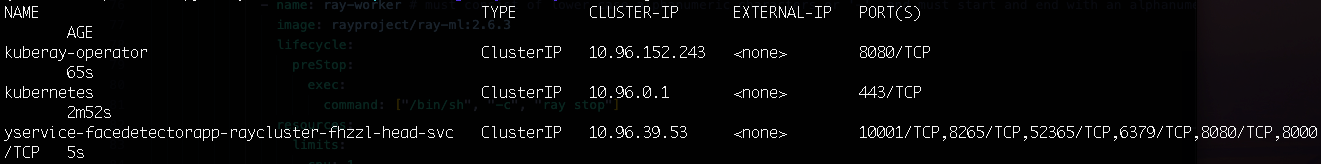

Get the name of the Ray head service using kubectl get services.

Use the name of that service in the following command:

    $ kubectl port-forward svc/rayservice-facedetectorapp-raycluster-fhzzl-head-svc --address 0.0.0.0 8265:8265

Now, navigate to localhost:8265 to see the Ray dashboard. You’ll hopefully see the serve application in the “Running” state.

Once we’ve verified that, we can port forward our FastAPI app’s port 8000 to localhost and test our app using a simple curl or Python requests call:

    $ kubectl port-forward svc/rayservice-facedetectorapp-raycluster-fhzzl-head-svc --address 0.0.0.0 8000:8000

Now, either navigate to localhost:8000/docs to use the API or simply use the following snippet to test using a sample image available in this tutorial’s repository:

    import requests

    image_path = "https://raw.githubusercontent.com/yashprakash13/face-detector-app/master/test_images/single.jpg"
    api_endpoint = "http://localhost:8000/detect-face"
    params = (
        ('img_path', image_path),
    )
    response = requests.post(api_endpoint, params=params)
    print(response.text)

You’ll see the list of coordinates of face(s) detected within the image as the output.
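Note that img_path travels in the URL’s query string rather than in the request body, which is why the call above passes params= instead of json=. FastAPI treats simple function parameters such as img_path: str as query parameters, even on a POST route. The standard-library sketch below builds an equivalent request URL so the encoding is visible; the requests call above does this for you.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

image_path = "https://raw.githubusercontent.com/yashprakash13/face-detector-app/master/test_images/single.jpg"
api_endpoint = "http://localhost:8000/detect-face"

# Percent-encode the image URL and append it as the img_path query parameter.
query = urlencode({"img_path": image_path})
request_url = api_endpoint + "?" + query
print(request_url)

# Decoding the query string gives back the original image URL.
decoded = parse_qs(urlsplit(request_url).query)["img_path"][0]
print(decoded == image_path)
```

The same URL can be used with curl by sending a POST request to it, as long as the whole URL is quoted in the shell.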

A Few Parting words

In this tutorial, we walked through a straightforward way to scale our ML app on Kubernetes using Ray.

There are a few ways you can improve this app further:

Use the NGINX Ingress controller with the Kind cluster instead of manually port forwarding.

Extend the API’s functions by providing more ML features, such as detecting facial attributes like closed eyes, glasses, emotions, etc.

Explore how to deploy this app on a remote cluster using something like Google Kubernetes Engine (GKE).

The GitHub repo of this app is located at https://github.com/yashprakash13/face-detector-app.

Happy learning!
