API performance is one of your service's key selling points, and you need to maintain it as you scale to millions of users.

If your API is inefficient, the result is sluggish responses, periods of unavailability, and ultimately a bad user experience.

This is where you need to improve the parts of your API that keep it robust under load.

In this article, we will look at some important techniques that can significantly improve the performance and usability of your API as you scale.

Let’s dive in 👇

Starting out with HTTP/2

HTTP/2 brings significant performance improvements over HTTP/1.1, including stream prioritization, which gives developers some control over the order in which data is delivered.

This means that developers can indicate in advance which page resources should load first, thus optimizing page performance.

HTTP/2 also uses multiplexing: it sends multiple streams of data at once over a single TCP connection, so that no one resource blocks any other. In contrast, an HTTP/1.1 connection handles resources one after the other, so if one resource is slow or cannot be loaded, it blocks all the resources queued behind it.
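To make the difference concrete, here is a toy model in Python. It is not real HTTP code, just arithmetic under stated assumptions: every resource has a hypothetical "ready time" on the server, transfer time and bandwidth sharing are ignored, and HTTP/1.1 is limited to a single connection. The function names are made up for this sketch.

```python
from itertools import accumulate


def sequential_finish_times(ready_ms):
    """One response at a time on a single connection:
    each response has to wait for every response queued before it."""
    return list(accumulate(ready_ms))


def multiplexed_finish_times(ready_ms):
    """Independent streams on a single connection:
    each response is delivered as soon as it is ready."""
    return list(ready_ms)


# Hypothetical preparation times in milliseconds: one slow resource, two fast ones.
ready_ms = [500, 20, 30]

print(sequential_finish_times(ready_ms))   # [500, 520, 550]
print(multiplexed_finish_times(ready_ms))  # [500, 20, 30]
```

In the sequential case the two fast resources are stuck behind the slow one. In the multiplexed case they arrive almost immediately.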

Using connection pooling

Connection pooling refers to reusing and managing established database connections.

This eliminates the need to establish a new connection for every API request, which drastically reduces connection overhead.

For example, let’s consider an e-commerce API handling numerous concurrent requests for product information. Without connection pooling, each request would require establishing a new database connection, resulting in a high overhead and increased latency. However, by implementing connection pooling, the API can reuse existing connections from the pool, allowing it to handle a larger number of requests simultaneously, leading to faster response times and a more responsive user experience.

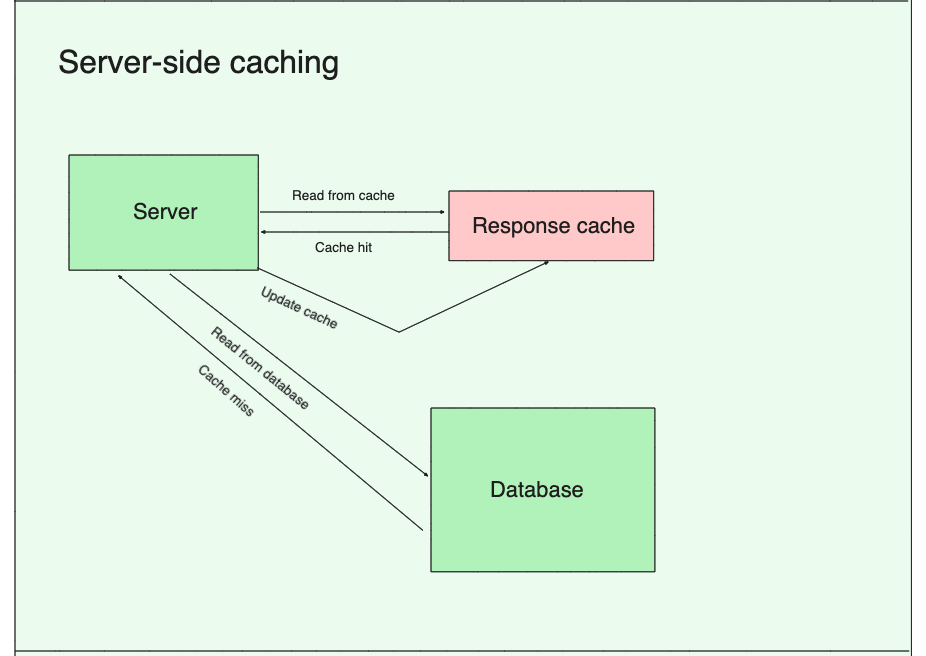
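Here is a minimal sketch of the idea in Python, using only the standard library. The `ConnectionPool` class is a hypothetical helper written for this example, and SQLite stands in for whatever database your API talks to. In production you would more likely rely on the pooling built into your database driver or ORM.

```python
import queue
import sqlite3
from contextlib import contextmanager


class ConnectionPool:
    """A tiny fixed-size pool: connections are opened once and then reused."""

    def __init__(self, database, size=3):
        self._idle = queue.Queue(maxsize=size)
        for _ in range(size):
            # check_same_thread=False allows a connection to be handed to another
            # thread; the pool makes sure only one borrower holds it at a time.
            self._idle.put(sqlite3.connect(database, check_same_thread=False))

    @contextmanager
    def connection(self, timeout=5.0):
        conn = self._idle.get(timeout=timeout)  # waits if every connection is busy
        try:
            yield conn
        finally:
            self._idle.put(conn)  # hand the connection back instead of closing it

    def close(self):
        while not self._idle.empty():
            self._idle.get_nowait().close()


pool = ConnectionPool(":memory:", size=2)

used = set()
for _ in range(10):  # ten "API requests"
    with pool.connection() as conn:
        conn.execute("SELECT 1").fetchone()
        used.add(id(conn))

print(len(used))  # 2
```

Ten requests were served, but only two connections were ever opened.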

Server-side caching

Server-side caching is a powerful technique that significantly boosts API performance by storing frequently accessed data in memory on the server.

By caching API responses, subsequent identical requests can be served directly from the cache, eliminating the need for time-consuming data retrieval and processing.

For instance, imagine the same e-commerce API that serves product information to thousands of users simultaneously during peak shopping seasons. With server-side caching in place, the API can cache product details, pricing, and availability, ensuring that subsequent requests for the same products are served quickly from the cache. This reduces the load on the database and other backend systems, enabling the API to handle increased traffic efficiently.
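A minimal sketch of such a cache in Python could look like this. The `TTLCache` class, the 30-second expiry, and the `load_product_from_db` function are all assumptions made for illustration. A real deployment would often use a shared cache such as Redis or Memcached, and would also need a strategy for invalidating entries when prices or stock change.

```python
import time


class TTLCache:
    """In-memory cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_load(self, key, loader):
        now = self._clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # cache hit: no backend call
        value = loader(key)  # cache miss or expired entry: go to the backend
        self._entries[key] = (now + self._ttl, value)
        return value


calls = []  # records every trip to the (pretend) database


def load_product_from_db(product_id):
    # Hypothetical stand-in for a slow database query.
    calls.append(product_id)
    return {"id": product_id, "name": "keyboard", "price": 49.0}


cache = TTLCache(ttl_seconds=30)
for _ in range(1000):
    product = cache.get_or_load(42, load_product_from_db)

print(len(calls))  # 1
```

A thousand requests for the same product resulted in a single database call.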

Pagination for larger responses

Pagination helps manage large datasets efficiently by reducing the amount of data transferred in each API response.

By dividing data into smaller, manageable chunks or pages, APIs can deliver faster response times and optimize resource usage.

Imagine a social media timeline showing you recent posts. As you scroll toward the bottom, the posts do not all appear at once; older ones take a moment to load. This is pagination at work.

Without pagination, retrieving all posts in a single request would result in a very large payload, leading to increased response times and potentially overwhelming the client app.
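As a rough sketch, page-based pagination can be as simple as the Python function below. The `get_page` name and the response fields are assumptions for this example. Against a real database you would push the slicing into the query (for instance with `LIMIT` and `OFFSET`, or with a cursor) rather than loading everything into memory first.

```python
def get_page(items, page=1, page_size=20):
    """Return one page of items plus the metadata a client needs to continue."""
    if page < 1 or page_size < 1:
        raise ValueError("page and page_size must be positive")
    start = (page - 1) * page_size
    chunk = items[start:start + page_size]
    has_next = start + page_size < len(items)
    return {
        "items": chunk,
        "page": page,
        "page_size": page_size,
        "total": len(items),
        "next_page": page + 1 if has_next else None,
    }


posts = [f"post-{i}" for i in range(1, 96)]  # 95 hypothetical posts

first = get_page(posts, page=1, page_size=20)
last = get_page(posts, page=5, page_size=20)

print(len(first["items"]), first["next_page"])  # 20 2
print(len(last["items"]), last["next_page"])    # 15 None
```

The client asks for `next_page` only when the user actually scrolls that far.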

Asynchronous logging

Async logging is a powerful concept that significantly improves API performance by leveraging asynchronous processing to handle logging operations.

By decoupling the logging process from the main API execution flow, you minimize the impact on response times and overall system performance.

For example, consider a payment processing API that handles a high volume of transactions. Without async logging, each logging operation, such as recording transaction details or error messages, would add processing time to the request and weigh on API performance. With async logging, the request handler only hands the log record to a queue, and a background worker writes it out.
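In Python, one way to sketch this is with the standard library's `QueueHandler` and `QueueListener`. The logger name, the `process_payment` function, and the in-memory `StringIO` target (standing in for a slow file or network destination) are assumptions for this example.

```python
import io
import logging
import logging.handlers
import queue

log_queue = queue.Queue(-1)  # unbounded queue between the API and the log writer

# Stand-in for a slow destination such as a file or a remote log service.
stream = io.StringIO()
slow_handler = logging.StreamHandler(stream)
slow_handler.setFormatter(logging.Formatter("%(levelname)s %(message)s"))

# The listener drains the queue on a background thread and does the slow write.
listener = logging.handlers.QueueListener(log_queue, slow_handler)
listener.start()

logger = logging.getLogger("payments")
logger.setLevel(logging.INFO)
logger.propagate = False
# The request path only enqueues the record, which is cheap.
logger.addHandler(logging.handlers.QueueHandler(log_queue))


def process_payment(transaction_id, amount):
    # Hypothetical handler: the log call returns without waiting for the write.
    logger.info("processed transaction %s for %.2f", transaction_id, amount)
    return "ok"


process_payment("tx-1001", 25.0)

listener.stop()  # processes the records still in the queue, then stops the thread
print(stream.getvalue())
```

One trade-off to keep in mind: records that are still in the queue when the process crashes can be lost, so call `listener.stop()` during a clean shutdown.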

Using PATCH instead of PUT

Using the PATCH method instead of PUT can significantly improve API performance by reducing the amount of data transferred and minimizing unnecessary updates.

PATCH allows clients to send only the modified fields or properties of a resource, rather than sending the entire resource representation as required by PUT. This results in smaller payload sizes and reduced network overhead, leading to faster response times.

For example, consider a customer management API where clients can update customer information. With PUT, clients would need to send the entire customer object, including the unchanged fields, for each update. With PATCH, clients can send only the modified fields, reducing both the amount of data transferred and the processing time on the server side.
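Here is a small Python sketch of the difference. The customer record and both helper functions are made up for illustration, and the PATCH handler is a simple shallow merge. Real APIs usually follow a defined patch format such as JSON Merge Patch (RFC 7396) or JSON Patch (RFC 6902), which also cover nested fields and deletions.

```python
import json


def apply_put(stored, payload):
    """PUT: the payload is the complete new representation of the resource."""
    return dict(payload)


def apply_patch(stored, changes):
    """PATCH (shallow merge): only the fields listed in the payload change."""
    updated = dict(stored)
    updated.update(changes)
    return updated


# Hypothetical stored customer record.
customer = {
    "id": 7,
    "name": "Ada Lovelace",
    "email": "ada@example.com",
    "address": "12 Analytical Way",
    "phone": "555-0100",
}

# The client wants to change only the email address.
put_body = {**customer, "email": "ada@newmail.example"}
patch_body = {"email": "ada@newmail.example"}

# Both requests lead to the same stored record...
assert apply_put(customer, put_body) == apply_patch(customer, patch_body)

# ...but the PATCH body is much smaller on the wire.
print(len(json.dumps(put_body)), len(json.dumps(patch_body)))
```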

That’s all for today!

If you enjoyed this issue, feel free to share it with a friend!